STORYGOLD

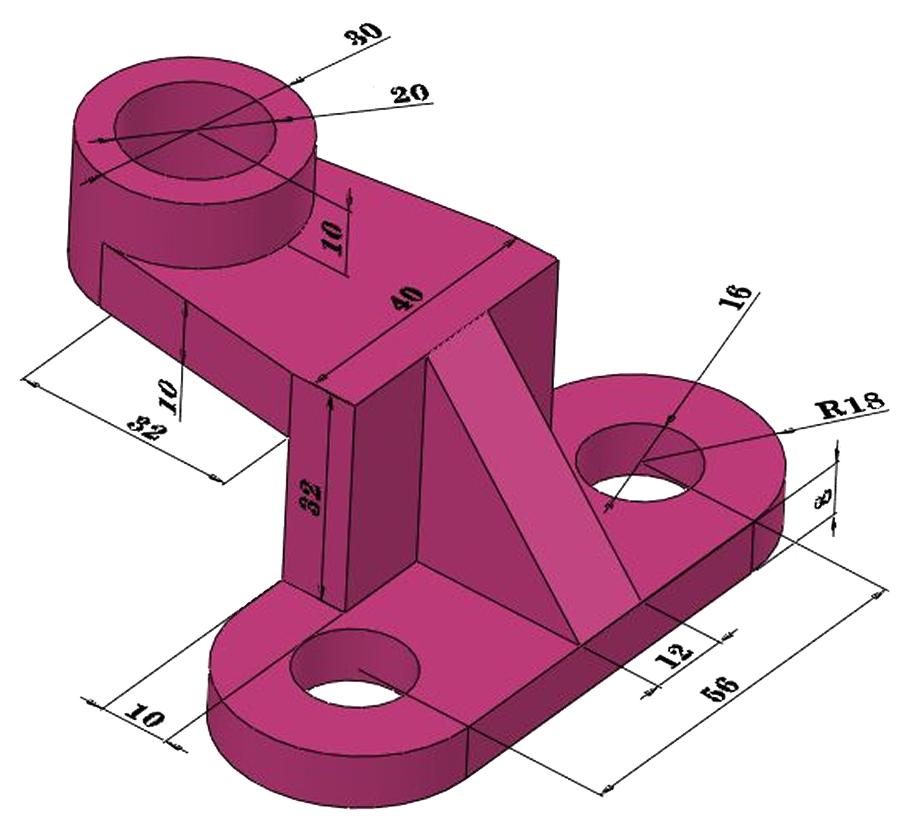

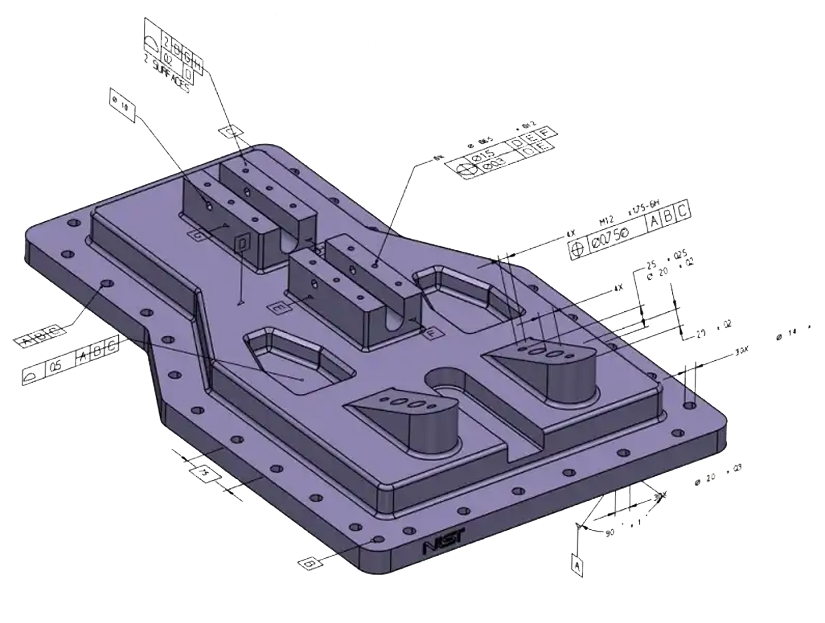

foundation model for parametric CAD

We've got lots of experience building foundation models at Waymo and Google.

LoRA Fine-Tuning for Vision-Language Models

LoRA is touted as being as effective as full fine-tuning, but is it true? LoRA fine-tuning performs significantly worse than full fine-tuning for vision-language models learning spatial reasoning on CAD data, suggesting that LoRA's low-rank assumption fails when teaching models genuinely new capabilities far from their pre-training distribution. If so, effective CAD model training may require full fine-tuning with 100,000+ examples and substantial GPU resources, rather than the more efficient LoRA approach that works well for tasks closer to the model's existing knowledge.
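To make the "low-rank assumption" concrete, here is a minimal sketch of the standard LoRA formulation, in which a frozen weight matrix W is adapted as W + (alpha / r) · B · A, with B and A much smaller than W. It is not our training code: the helper names, the 4096 × 4096 layer size, and the rank of 16 are hypothetical values chosen only for illustration.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters: full fine-tuning vs. a rank-`rank` LoRA adapter."""
    full = d_out * d_in            # every entry of W is trainable
    lora = rank * (d_out + d_in)   # B is d_out x rank, A is rank x d_in
    return full, lora


def lora_effective_weight(w, b, a, alpha, rank):
    """Return W + (alpha / rank) * B @ A, the weight the adapted layer applies."""
    scale = alpha / rank
    delta = matmul(b, a)           # the update has rank at most `rank`
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


# Hypothetical layer size and rank, for illustration only.
full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=16)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

With these illustrative numbers the adapter trains well under 1% of the layer's parameters. That is where the efficiency comes from, and it is also the constraint: the learned update can never exceed rank r, however much data it sees.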

How good is GPT-5 at 3D?

GPT-5 can write syntactically perfect code with the right prompting techniques, but when it comes to actually understanding 3D space and geometry, even OpenAI's most advanced model falls dramatically short of specialized fine-tuned systems. This reveals a critical insight: you can't prompt a model into truly "seeing" and reasoning about 3D space; some capabilities require fundamentally different training approaches that go far beyond clever prompting. In this post, we present an empirical analysis of GPT-5's performance on parametric CAD generation.

We believe that building AGI requires intelligence that can understand the 3D world.