Low-Rank Adaptation (LoRA) has become a widely adopted technique for fine-tuning large language models, offering significant computational savings by updating only a small fraction of model parameters. The authors of the original LoRA paper reported that fine-tuning can work even with a rank as low as 1 or 2.

However, our recent experiments with vision-language models on a parametric CAD dataset reveal a critical limitation.

Setup

We ran fine-tuning experiments on a parametric CAD dataset using two vision-language models (models that accept text and images together as input):

- LLaVA 1.6 (34B parameters): We compared full fine-tuning against LoRA-based fine-tuning

- GPT-4 (reportedly 1T+ parameters): Fine-tuned using an undisclosed method (likely LoRA, given the model's size and OpenAI's guidance to use hundreds to low thousands of data points)

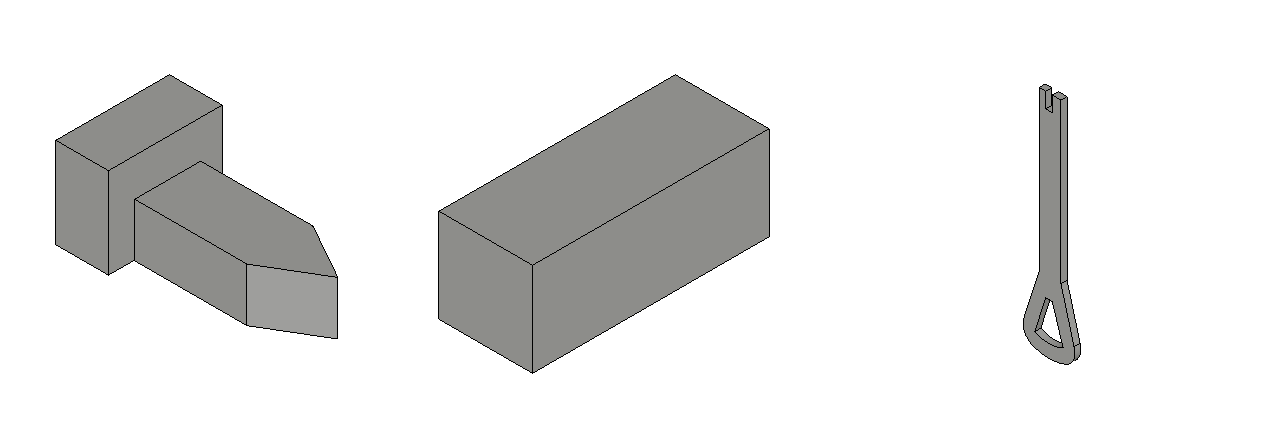

The task required models to develop spatial reasoning and geometric understanding, domains that differ significantly from their original training distributions. (Some examples are pictured above.)

Evaluation Metric

We employed Intersection-over-Union (IoU) as our primary metric for geometric accuracy. IoU measures the overlap between predicted and ground-truth geometric regions:

IoU = Area of Overlap / Area of Union

This metric provides a robust quantitative assessment of spatial prediction accuracy, with values ranging from 0 (no overlap) to 1 (perfect agreement).
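To make the metric concrete, here is a minimal sketch of an IoU computation. It assumes each shape has been discretized into a set of occupied voxel coordinates, which is only one possible representation and not necessarily the one used in our evaluation pipeline. The `box` helper and the example shapes are hypothetical, chosen purely for illustration.

```python
def iou(predicted: set, ground_truth: set) -> float:
    """IoU of two shapes given as sets of occupied grid cells."""
    union = predicted | ground_truth
    if not union:
        # Assumption: two empty shapes count as perfect agreement.
        return 1.0
    return len(predicted & ground_truth) / len(union)


def box(x0, y0, z0, x1, y1, z1) -> set:
    """Hypothetical helper: voxels of an axis-aligned box (half-open ranges)."""
    return {(x, y, z)
            for x in range(x0, x1)
            for y in range(y0, y1)
            for z in range(z0, z1)}


truth = box(0, 0, 0, 4, 4, 4)  # 64 voxels
pred = box(2, 0, 0, 6, 4, 4)   # same size, shifted 2 cells along x
score = iou(pred, truth)       # overlap 32 voxels, union 96 voxels
print(f"IoU = {score:.3f}")    # prints "IoU = 0.333"
```

Note how a prediction with the right size and shape but a two-cell offset already drops to an IoU of one third, which is why the metric is sensitive to spatial errors.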

Results

The performance gap between fine-tuning approaches was substantial:

| Model | Fine-tuning Method | Geometric Accuracy (IoU) |
|---|---|---|
| LLaVA 1.6 34B | Full fine-tuning | 0.75 |
| LLaVA 1.6 34B | LoRA fine-tuning | 0.37 |
| GPT-4 | Undisclosed fine-tuning method | 0.49 |
| GPT-4 | Baseline (no fine-tuning) | 0.43 |

For LLaVA 1.6 34B, LoRA fine-tuning scores 51% lower than full fine-tuning (an IoU of 0.37 vs 0.75)!
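The relative gaps can be reproduced from the table with a few lines of arithmetic. This is just a sketch of the calculation; the helper name `relative_change` is ours, and the inputs are the IoU values reported above.

```python
def relative_change(new: float, reference: float) -> float:
    """Signed change of `new` relative to `reference`, in percent."""
    return (new - reference) / reference * 100


# IoU values taken from the results table.
lora_vs_full = relative_change(0.37, 0.75)      # LLaVA: LoRA vs full fine-tuning
gpt4_vs_baseline = relative_change(0.49, 0.43)  # GPT-4: fine-tuned vs baseline

print(f"LoRA vs full fine-tuning: {lora_vs_full:.1f}%")
print(f"GPT-4 fine-tuned vs baseline: {gpt4_vs_baseline:.1f}%")
```

The first figure rounds to the 51% drop quoted above; the second shows that GPT-4 fine-tuning improved on its own baseline by roughly 14% in relative terms.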

Not Low-Rank

LoRA operates under the assumption that fine-tuning updates to pre-trained weights have low intrinsic rank. In other words, the required weight changes can be well-approximated by low-rank matrices. This assumption holds well for tasks within the model's existing capability distribution, such as domain adaptation or instruction following.
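As a rough illustration of where the savings come from: for a weight matrix of shape d × k, LoRA trains two factors of shapes d × r and r × k instead of the full d × k update. The sketch below counts trainable parameters for a single layer. The layer size of 4096 × 4096 and the ranks shown are hypothetical and are not the configuration used in our experiments.

```python
def full_update_params(d: int, k: int) -> int:
    """Trainable parameters when the whole d x k weight matrix is updated."""
    return d * k


def lora_update_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for LoRA factors B (d x r) and A (r x k)."""
    return r * (d + k)


d = k = 4096  # hypothetical layer size, for illustration only
for r in (1, 2, 8, 64):
    share = lora_update_params(d, k, r) / full_update_params(d, k)
    print(f"rank {r:>2}: {lora_update_params(d, k, r):>7} trainable params "
          f"({share:.3%} of the full update)")
```

Even at rank 64 the adapter trains only a few percent of the layer's parameters, which is the efficiency LoRA buys at the cost of restricting the update to rank r.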

However, learning spatial reasoning and 3D geometric understanding most likely requires high-rank updates. The new task is just too different from what's in the model's original training set.
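The structural constraint behind this hypothesis can be shown on toy matrices: a product of a d × r matrix and an r × k matrix never has rank above r, so a rank-r adapter cannot represent an update that needs more independent directions. The sketch below uses small hypothetical matrices and an exact-arithmetic rank routine. It illustrates the rank bound only; it does not show that our CAD task actually requires high-rank updates.

```python
from fractions import Fraction


def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def rank(matrix):
    """Matrix rank via Gaussian elimination with exact fractions."""
    rows = [[Fraction(v) for v in row] for row in matrix]
    found = 0
    for col in range(len(rows[0])):
        pivot = next((i for i in range(found, len(rows))
                      if rows[i][col] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        rows[found], rows[pivot] = rows[pivot], rows[found]
        for i in range(found + 1, len(rows)):
            factor = rows[i][col] / rows[found][col]
            rows[i] = [x - factor * y for x, y in zip(rows[i], rows[found])]
        found += 1
    return found


# Hypothetical rank-2 LoRA factors for a 4 x 4 weight matrix.
B = [[1, 0], [0, 1], [1, 1], [2, 3]]   # 4 x 2
A = [[1, 0, 2, 1], [0, 1, 1, 3]]       # 2 x 4
lora_update = matmul(B, A)

# A toy "required" update that touches all four directions independently.
full_rank_update = [[1 if i == j else 0 for j in range(4)] for i in range(4)]

print("rank of LoRA update:", rank(lora_update))
print("rank of full update:", rank(full_rank_update))
```

Whatever values B and A take, the first rank can never exceed 2 here, while the second update has rank 4 and so lies outside what this adapter can express.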

The 1 Trillion Parameter Paradox

Despite having approximately 30× more parameters than LLaVA 1.6, fine-tuned GPT-4 achieved far lower geometric accuracy than fully fine-tuned LLaVA (0.49 vs 0.75). We cannot definitively determine OpenAI's fine-tuning methodology. But if GPT-4 was fine-tuned using LoRA (as the limitations of API-based fine-tuning suggest), its small gain over baseline (0.43 to 0.49) is consistent with the weak LoRA result we saw with LLaVA.

Implications

These findings have important implications for StoryGold as we aim to train the world's best AI foundation model for generating parametric, editable 3D models.

- Much more data needed: Full fine-tuning requires 100,000+ high-quality training examples. That is one to two orders of magnitude more than what LoRA requires (1000s to 10,000s).

- Nontrivial compute requirements: Full fine-tuning will require many high-end GPUs (A100/H100 class or newer), so that's something we need to budget for.

- Data is a moat: Since a parametric CAD dataset that approximates the difficulties of professional CAD design does not exist, anyone who builds such a dataset will be able to train the frontier model.

- Open-source models are superior: On this task, a fully fine-tuned open-source model outperformed a proprietary model from the families considered "the best" in other domains (GPT, Claude, etc.). This means we can train using open-source models and release our models to the open-source community as well.

Conclusion

While LoRA remains valuable for efficient adaptation in many scenarios, our results demonstrate that it should not be considered universally equivalent to full fine-tuning. For applications requiring models to develop genuinely new capabilities, particularly in spatial reasoning, geometric understanding, or other domains not commonly seen in the pre-training distribution, full fine-tuning will likely be necessary.

As an aside, we feel our finding underscores the value of open-source model development, which enables researchers to make informed decisions about fine-tuning strategies, understand model limitations, and maintain full control over the training process.