We ran a quick-and-dirty evaluation of GPT-5, OpenAI's latest frontier model, on parametric CAD code generation. Along the way we tried two simple techniques: retrieval-augmented generation (RAG) over the CadQuery API documentation and few-shot learning. While GPT-5 represents significant progress toward more general AI systems, our experiments reveal limitations in its spatial reasoning, similar to those of its predecessor, GPT-4.

Experimental Design

We evaluated the following configurations on our parametric CAD generation benchmark, with GPT-4 and our fine-tuned BELLA models included for comparison:

- GPT-5 (baseline): Zero-shot generation

- GPT-5 + Few-shot: Prompted with 3-4 high-quality example image-code pairs

- GPT-5 + RAG: Augmented with retrieval from CadQuery API documentation

- GPT-5 + RAG + Few-shot: Combined approach using both techniques

- GPT-4 (baseline): Previous generation model for comparison

- BELLA v1.0 (13B and 34B): Our fine-tuned models for reference
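
To make the four GPT-5 configurations concrete, here is a minimal sketch of how such prompts could be assembled. It is an illustration, not the pipeline used in this evaluation: the retriever is a toy word-overlap ranker, images are represented only by a file path, and every name in it (`FewShotExample`, `retrieve`, `build_prompt`, `DOCS`, `EXAMPLES`) is hypothetical. The documentation snippets are short paraphrases written for the example.

```python
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FewShotExample:
    image_path: str  # hypothetical path to a rendered image of the part
    code: str        # reference CadQuery script for that image


def tokenize(text: str) -> set:
    """Lowercase word set; good enough for a toy retriever."""
    return set(re.findall(r"[a-z_]+", text.lower()))


def retrieve(query: str, docs: List[str], k: int = 2) -> List[str]:
    """Return the k snippets sharing the most words with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(task: str,
                 docs: Optional[List[str]] = None,
                 examples: Optional[List[FewShotExample]] = None,
                 k: int = 2) -> str:
    """Zero-shot if docs and examples are both None; otherwise RAG and/or few-shot."""
    parts = ["You write CadQuery Python code that reproduces the target part."]
    if docs:
        snippets = retrieve(task, docs, k)
        parts.append("Relevant CadQuery documentation:\n"
                     + "\n".join(f"- {s}" for s in snippets))
    if examples:
        for i, ex in enumerate(examples, 1):
            parts.append(f"Example {i} (image: {ex.image_path}):\n{ex.code}")
    parts.append(f"Task: {task}")
    return "\n\n".join(parts)


# Illustrative data only (assumed, not taken from the benchmark).
DOCS = [
    "Workplane.box(length, width, height): make a box solid",
    "Workplane.hole(diameter): cut a hole through the solid",
    "Workplane.fillet(radius): round the selected edges",
]
EXAMPLES = [
    FewShotExample("plate.png",
                   'import cadquery as cq\nresult = cq.Workplane("XY").box(10, 10, 5)'),
]

task = "a box with a hole through the top face"
zero_shot = build_prompt(task)
rag_few_shot = build_prompt(task, docs=DOCS, examples=EXAMPLES)
print(rag_few_shot)
```

Passing only `docs` gives the RAG configuration and passing only `examples` gives the few-shot one, so the four variants differ only in what is prepended to the task.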

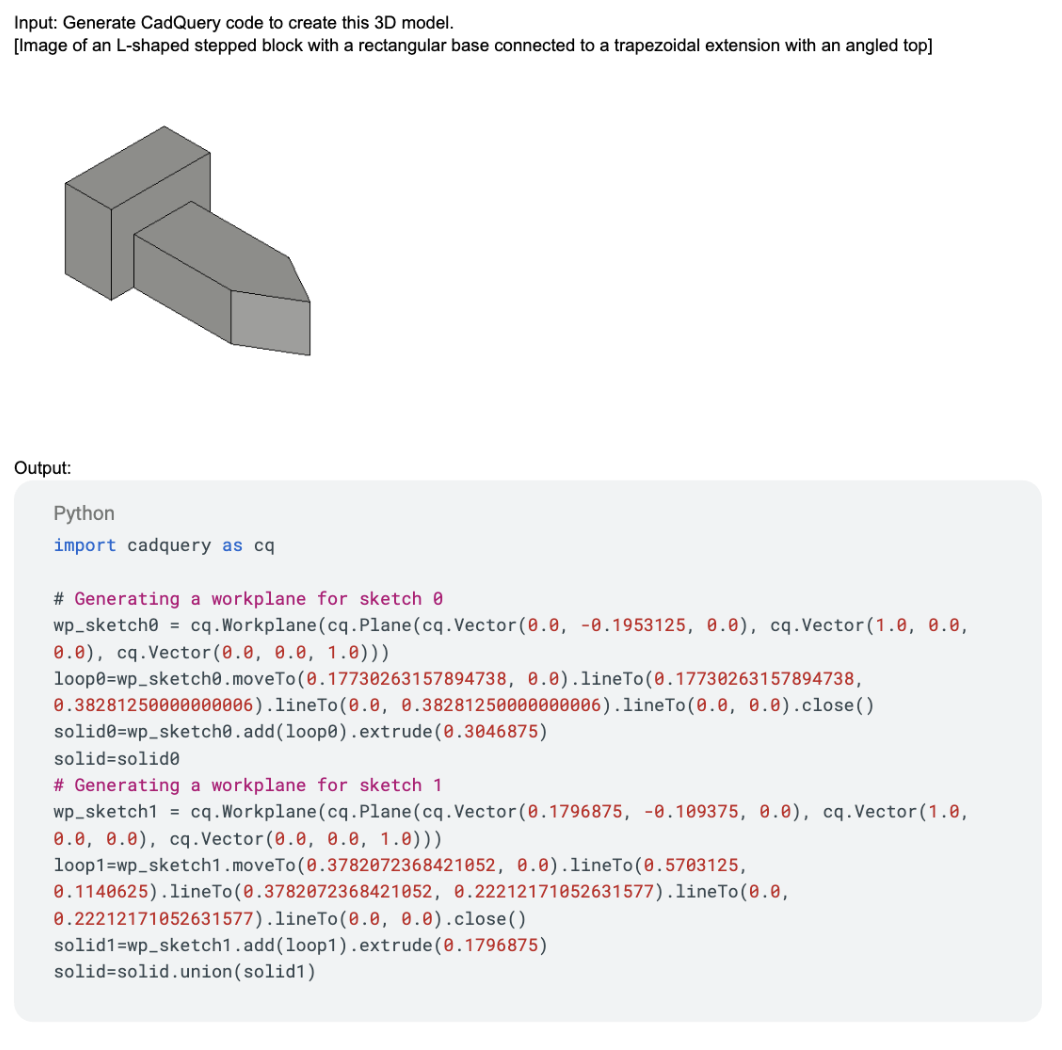

An example of a few-shot prompt:

We measured two key metrics:

- Code Validity Rate: The percentage of generated Python CadQuery code samples that run without errors and produce a valid solid

- Intersection-over-Union (IoU): Geometric accuracy of the valid outputs, measured against ground truth models (scale from 0 to 1.0, where 1.0 represents perfect geometric agreement)
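
The two metrics can be sketched in a few lines. This is a rough illustration under stated assumptions, not the benchmark's actual implementation: solids are approximated as sets of occupied voxels, and axis-aligned boxes stand in for real CAD geometry. The helper names (`validity_rate`, `voxelize_box`, `iou`) are hypothetical.

```python
from itertools import product
from typing import List, Set, Tuple

Voxel = Tuple[int, int, int]


def validity_rate(outcomes: List[bool]) -> float:
    """Fraction of samples that ran and produced a valid solid."""
    return sum(outcomes) / len(outcomes)


def voxelize_box(origin: Tuple[float, float, float],
                 size: Tuple[float, float, float],
                 pitch: float = 1.0) -> Set[Voxel]:
    """Voxel indices covered by an axis-aligned box (toy stand-in for a solid)."""
    ranges = [range(round(o / pitch), round((o + s) / pitch))
              for o, s in zip(origin, size)]
    return set(product(*ranges))


def iou(pred: Set[Voxel], truth: Set[Voxel]) -> float:
    """Shared volume divided by combined volume, both counted in voxels."""
    union = pred | truth
    return len(pred & truth) / len(union) if union else 0.0


# A 4x4x4 ground-truth cube versus a prediction shifted by one unit in x.
truth = voxelize_box((0, 0, 0), (4, 4, 4))
pred = voxelize_box((1, 0, 0), (4, 4, 4))

score = iou(pred, truth)  # 48 shared voxels out of 80 in the union
rate = validity_rate([True, True, False, True])
print(f"IoU = {score:.2f}, validity = {rate:.0%}")
```

A one-unit offset on a four-unit cube already drops IoU to 0.60, which shows how sharply the metric penalizes shapes that are nearly right but misplaced.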

Results

| Model | Validity % | IoU Score |
|---|---|---|
| BELLA v1.0 34B | 99% | 0.75 |
| BELLA v1.0 13B | 98% | 0.73 |
| GPT-5 + RAG + Few-shot | 98% | 0.49 |
| GPT-5 + RAG | 92% | 0.48 |
| GPT-5 + Few-shot | 90% | 0.47 |
| GPT-5 | 89% | 0.43 |
| GPT-4 | 93% | 0.43 |

Combining RAG and few-shot learning raised GPT-5's code validity rate from 89% to 98%, on par with BELLA v1.0 13B. In other words, giving the model the CadQuery API documentation and a few usage examples cut syntax and API errors by 82% in relative terms (failures fell from 11% to 2%). This is not too surprising: GPT-5 is exceptionally good at coding tasks, as the many widely adopted codegen tools show.
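
The 82% figure is a relative reduction in the failure rate, not a difference in percentage points. A small check of the arithmetic, using the validity rates from the table (the function name is just for illustration):

```python
def relative_failure_reduction(validity_before: float, validity_after: float) -> float:
    """Relative drop in failure rate when validity rises from before to after."""
    fail_before = 1.0 - validity_before
    fail_after = 1.0 - validity_after
    return (fail_before - fail_after) / fail_before


# GPT-5 zero-shot (89% valid) versus GPT-5 + RAG + few-shot (98% valid).
reduction = relative_failure_reduction(0.89, 0.98)
print(f"{reduction:.0%}")  # prints 82%
```

The failure rate falls by 9 percentage points from a starting point of 11, and 9/11 is roughly 0.82.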

Despite the substantial improvement in code validity, geometric accuracy remained largely unchanged. GPT-5's IoU score improved only marginally, from 0.43 (baseline) to 0.49 (RAG + few-shot), whereas BELLA v1.0 34B (fine-tuned on a CadQuery dataset) reaches 0.75 IoU.

What does this mean? Generating syntactically correct code is fundamentally different from generating code that produces geometrically accurate three-dimensional shapes. RAG and few-shot learning can teach a model correct API usage patterns, but they cannot teach deep spatial awareness.

Analysis

Most likely, teaching a model a skill that lies far from its pre-training data, such as spatial reasoning, requires expensive fine-tuning or even a specialized architecture trained from scratch. It is also possible that LLaVA's vision-language architecture is better suited to geometric tasks than GPT's text-centric architecture, even before any fine-tuning.

While GPT-5 represents impressive progress in general AI capabilities, our experiments demonstrate that frontier-scale models augmented with RAG and few-shot learning still cannot match specialized, fine-tuned models on 3D spatial reasoning tasks.